The average person tends to think of translation and interpreting as essentially the same thing. If you can speak, read, and write in two languages, you should be able to translate as well as you interpret, and vice versa. Those of us in the language-service industry know better. We understand how different these professions can be, and we recognize that the skills that make a great interpreter or a great translator do not always overlap. It is the exceptional language professional who is able to excel in both fields.

Yet it may be precisely because most people don’t understand these differences that we are now witnessing a technology-driven convergence of translation and interpreting.

This convergence is happening through developments in artificial intelligence, voice recognition, deep learning, big data, and neural networks. It is creeping into our daily lives through AI assistants like Siri, Cortana, and Alexa. It is being made possible by recent advances in decades-old technology.

Anyone with a smartphone has experienced the leap forward that voice recognition has made in recent years. After plateauing at an accuracy rate of around 75%, which for a long time seemed to be its upper limit, it has pushed through in just a few short years to near-human equivalence, recognizing 95% or more of speech. These improvements are what have made Siri, Cortana, and Alexa possible.

They are also responsible for many of the shifts in the way we think about translation and interpreting technology.

Take “Skype Translator”, for instance. Its very name, at first blush, seems to be a misnomer. It is being developed to enable near real-time conversation using voice-over-internet-protocol (VoIP) software. In other words, it is interpreting technology. The ultimate goal is for a person to be able to speak a source language using Skype, while another person seamlessly hears the speech converted into a target language. And it’s already happening. Though far from perfect, it’s getting there. And the really significant point is that Microsoft is relying on the convergence of translation and interpreting technology to make it possible.

Skype’s voice-recognition technology captures the speaker’s speech and converts it to text. That text is then processed using both statistical machine translation and, more recently, neural network translation. The “translated” text is then “interpreted” by computer-generated speech synthesis in another language. Translation and interpreting, all in one.
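The three stages described above can be sketched as a simple pipeline. This is only an illustration of the flow, not Skype’s actual implementation: the `Audio` class, the function names, and the tiny phrase table are all hypothetical stand-ins for real speech-recognition, machine-translation, and speech-synthesis engines.

```python
from dataclasses import dataclass


@dataclass
class Audio:
    """Hypothetical stand-in for an audio clip: it just carries the spoken words."""
    language: str
    words: str


# Toy phrase table standing in for a real machine-translation engine (assumption).
TOY_PHRASES = {
    ("en", "es"): {"good morning": "buenos días", "thank you": "gracias"},
}


def recognize_speech(audio: Audio) -> str:
    """Stage 1: voice recognition converts speech to text (stubbed here)."""
    return audio.words.lower().strip()


def translate_text(text: str, source: str, target: str) -> str:
    """Stage 2: machine translation of the recognized text (toy lookup)."""
    table = TOY_PHRASES.get((source, target), {})
    # Fall back to the untranslated text when the phrase is unknown.
    return table.get(text, text)


def synthesize_speech(text: str, language: str) -> Audio:
    """Stage 3: speech synthesis 'speaks' the translated text (stubbed here)."""
    return Audio(language=language, words=text)


def interpret(audio: Audio, target: str) -> Audio:
    """Chain the three stages: speech -> text -> translated text -> speech."""
    text = recognize_speech(audio)
    translated = translate_text(text, audio.language, target)
    return synthesize_speech(translated, target)


result = interpret(Audio(language="en", words="Good morning"), target="es")
print(result.words)
```

The point of the sketch is the structure: each stage is a separate technology, and the “interpreting” the listener experiences is really translation of text sandwiched between two speech technologies.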

In short, we’re already living in the future.

There are multiple explanations for why these advances are happening now. One of the most important factors is computing power (and speed). Another is the abundance of data being produced, processed, and analyzed at a rate and in a manner never before seen. And a third, of particular significance to language services, is the understanding and use of neural networks.

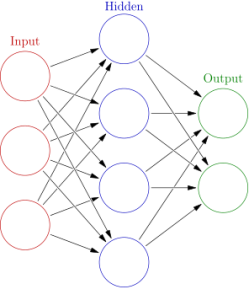

A neural network, in this context, refers to computer-based information processing loosely modeled on the way our brains solve problems. There are inputs and outputs, and processing in between. The network “learns” as more information is fed into it, improving its problem-solving and producing better outputs. Unlike a conventional computer program, which does the same thing over and over again (in the manner “programmed”), a neural network can improve as it is trained on new examples, on a trial-and-error basis.

So every time an incorrect translation is produced during training, the network is adjusted to make that output less likely. And every time a correct translation is produced, that success is reinforced, making it a more likely outcome the next time those same words appear.
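To make the trial-and-error idea concrete, here is a minimal sketch of a single artificial “neuron” (a perceptron) that learns a simple rule from examples. It is far simpler than anything used for translation, and the function names and the toy task (output 1 only when both inputs are 1) are assumptions chosen purely for illustration. The key behavior is the same in spirit: a wrong output nudges the internal weights, while a correct output leaves them alone.

```python
def predict(weights, bias, inputs):
    """Output 1 if the weighted sum of the inputs exceeds zero, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(examples, epochs=20, step=1):
    """Learn weights by trial and error over repeated passes through the examples."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in examples:
            # error is 0 when the guess was right, +1 or -1 when it was wrong.
            error = target - predict(weights, bias, inputs)
            # Only a wrong guess changes the weights.
            weights = [w + step * error * x for w, x in zip(weights, inputs)]
            bias += step * error
    return weights, bias


# Toy task: answer 1 only when both inputs are 1.
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

untrained = [predict([0, 0], 0, inputs) for inputs, _ in EXAMPLES]
weights, bias = train_perceptron(EXAMPLES)
trained = [predict(weights, bias, inputs) for inputs, _ in EXAMPLES]

print("before training:", untrained)
print("after training: ", trained)
```

Before training, the neuron gets the last example wrong; after a handful of passes over the same four examples, it answers all of them correctly.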

Image: Glosser.ca, own work, derivative of File:Artificial neural network.svg, CC BY-SA 3.0, https://commons.wikimedia.org/w/index.php?curid=24913461

The neural networks used for translation, such as recurrent neural networks, require data, lots of data. In the case of translation, this means quality parallel corpora, which, for the most part, have been prepared by professional human translators. The more high-quality translations there are between two languages (inputs), the more the system can learn (artificial intelligence), and the better the network-generated translation (output) will be. This is already the case with statistical machine translation, which relies on probabilities that a given translation will be correct. A neural network then takes the next step by learning which outputs are acceptable and which are not, and adjusting future translations accordingly.
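As a rough illustration of how probabilities can be drawn from a parallel corpus, the sketch below counts how often each source word was paired with each translation and turns those counts into relative frequencies. The four-entry corpus and the function names are invented for this example; real statistical systems align words and phrases automatically across millions of sentences and combine these estimates with other models.

```python
from collections import Counter, defaultdict

# Hypothetical, pre-aligned English-Spanish pairs standing in for a parallel corpus.
PARALLEL_CORPUS = [
    ("bank", "banco"),
    ("bank", "banco"),
    ("bank", "orilla"),
    ("river", "río"),
]


def estimate_probabilities(pairs):
    """Estimate P(target | source) as a relative frequency of observed pairs."""
    counts = defaultdict(Counter)
    for source, target in pairs:
        counts[source][target] += 1
    return {
        source: {t: c / sum(targets.values()) for t, c in targets.items()}
        for source, targets in counts.items()
    }


def most_likely_translation(table, source):
    """Pick the translation with the highest estimated probability."""
    candidates = table[source]
    return max(candidates, key=candidates.get)


table = estimate_probabilities(PARALLEL_CORPUS)
print(table["bank"])
print(most_likely_translation(table, "bank"))
```

With more human translations in the corpus, the counts become more reliable, which is why the quality and quantity of professionally translated text matter so much to these systems.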

Obviously, we’re just skimming the surface here. There is a lot more to neural networks and the way in which they’re being employed in language technology. But these systems represent both the present and the future.

So, where does that leave professional translators and interpreters?

First, we need to recognize the forces shaping our industry and understand what they can do right now, and what they will likely be able to accomplish in the near future. Second, we need to be aware that human translators and interpreters are still very much part of this process and will continue to be in the future. Our roles, however, have already begun to shift.

There is, and will continue to be, a need for “premium” translators and interpreters. Premium, in this context, means highly qualified language specialists with advanced degrees and international experience that enable them to provide high-level translation and interpreting services. These language professionals will do work that requires nuance, creativity, confidentiality, and accuracy. It will tend to be subject-specific and involve deep knowledge of a particular field or fields, such as law, finance, government, biotechnology, medicine, or engineering, to name just a few.

There will also be a host of new language-technology-related jobs. These include translation-technology analysts, machine-learning experts, terminologists, language data collectors/curators, semantic analysts, corpus linguists, computational linguists, and translation quality assessors. In addition, there will be evolving versions of editors, proofreaders, and quality-assurance analysts.

The low-hanging fruit of our industry is already being picked. Translation and interpreting that involve a good deal of repetition, with consistent terminology, are now being done by software, as are jobs that involve a volume of work beyond human capacity (such as translating hundreds of thousands of words a day) and work in emergency or crisis situations, where some bilingual interaction, however imperfect, is more important than flawless communication.

Language services are going high-tech and will only continue to become more technologically dependent. As a result, in order to carve out a niche in this reality, language professionals will need to become increasingly tech-savvy, in addition to being experts in their language pairs.

===

Source:

“Achieving Human Parity in Conversational Speech Recognition”, by W. Xiong, J. Droppo, X. Huang, F. Seide, M. Seltzer, A. Stolcke, D. Yu, G. Zweig. https://arxiv.org/abs/1610.05256